The primary purpose of tracking is to update the visual display based on the viewer's head position and orientation. Instead of tracking the viewer's eyes directly, we track the position and orientation of the user's head, and from this we determine the position and orientation of the two eyes.
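A minimal sketch of that last step follows. It assumes the tracked head point sits midway between the eyes, that orientation arrives as a 3x3 rotation matrix whose first column is the head's local "right" axis in world coordinates, and that the interpupillary distance (IPD) is a hypothetical 0.063 m. Real systems also account for the offset between the sensor and the eyes, which this sketch ignores. The function names are illustrative only.

```python
import math

# Hypothetical default interpupillary distance in meters (an assumption, not from the notes).
DEFAULT_IPD = 0.063

def eye_positions(head_pos, head_rot, ipd=DEFAULT_IPD):
    """Return (left_eye, right_eye) world positions.

    head_pos: (x, y, z) of the point midway between the eyes (assumed).
    head_rot: 3x3 rotation matrix (list of rows) mapping head-local axes to world axes.
    The head-local +x axis is assumed to point to the viewer's right.
    """
    # Column 0 of the rotation matrix is the head's local +x ("right") axis in world space.
    right = (head_rot[0][0], head_rot[1][0], head_rot[2][0])
    half = ipd / 2.0
    left_eye = tuple(p - half * r for p, r in zip(head_pos, right))
    right_eye = tuple(p + half * r for p, r in zip(head_pos, right))
    return left_eye, right_eye

def yaw_matrix(yaw_deg):
    """Rotation about the world +y (up) axis, e.g. the head turning left or right."""
    a = math.radians(yaw_deg)
    c, s = math.cos(a), math.sin(a)
    return [[c, 0.0, s],
            [0.0, 1.0, 0.0],
            [-s, 0.0, c]]
```

With the head at (0, 1.7, 0) and no rotation, the eyes land at x = -0.0315 and x = +0.0315; turning the head rotates that eye baseline with it.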

We may also be tracking the user's hand(s), fingers, legs or other interface devices.

Want tracking to be as 'invisible' as possible to the user.

Want the user to be able to move freely with few encumbrances

Want to be able to have multiple 'guests' nearby

Want to track as many objects as necessary

Want to have minimal delay between movement of an object and the detection of the object's new position / orientation (< 50 msec total)

Want tracking to be accurate (1mm and 1 degree)

In order to interact with the virtual world beyond moving through it, we probably need to track at least one of the user's hands as well. Tracking the position and orientation of the hand allows the user to interact with the virtual world or other users as though the user is wearing mittens with no fine control of the fingers. When thinking about how the user interacts with the worlds that you are building, think about the kinds of actions a person can do while wearing mittens.


large transmitter and one or more small sensors.

transmitter emits an electromagnetic field.

sensors report the strength of that field at their location to a computer

sensors can be polled specifically by the computer or transmit continuously.
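The notes do not say how field strength becomes a position, so the following is only a rough illustration. For an idealized magnetic dipole seen at a fixed relative orientation, the field magnitude falls off with the cube of distance, B = k / r³, so a distance estimate is r = (k / B)^(1/3). The calibration constant `k` and the function names are hypothetical. Real electromagnetic trackers use several orthogonal coils and calibration data to recover full position and orientation.

```python
def field_strength(r, k):
    """Idealized dipole falloff at a fixed orientation: B = k / r**3."""
    return k / r ** 3

def distance_from_field(b, k):
    """Invert B = k / r**3 to estimate distance from a measured field strength."""
    if b <= 0:
        raise ValueError("field strength must be positive")
    return (k / b) ** (1.0 / 3.0)

# Because B ~ 1/r^3, doubling the distance cuts the measured signal to 1/8,
# so the signal shrinks rapidly as the sensor moves away from the transmitter.
```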

uses:

advantages are:

disadvantages are:

examples:

rigid structures with multiple joints

one end is fixed, the other is the object being tracked

could be tracking users head, or their hand

physically measure the rotation about joints in the armature to compute position and orientation

structure is counter-weighted - movements are slow and smooth

Knowing the length of each segment and the rotation at each joint, it is easy to compute the location and orientation of the end point.
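That computation is forward kinematics. A minimal planar sketch, assuming a 2D chain of rigid segments where each joint angle is measured relative to the previous segment; a real armature works in 3D and chains 3D rotations the same way. The function name is hypothetical.

```python
import math

def armature_end_pose(lengths, joint_angles_deg, base=(0.0, 0.0)):
    """Forward kinematics for a planar chain of rigid segments.

    lengths: length of each segment, from the fixed base outward.
    joint_angles_deg: rotation measured at each joint, relative to the previous segment.
    Returns (x, y, heading_deg) of the free end point.
    """
    if len(lengths) != len(joint_angles_deg):
        raise ValueError("need one joint angle per segment")
    x, y = base
    heading = 0.0
    for length, angle in zip(lengths, joint_angles_deg):
        heading += math.radians(angle)   # accumulate rotation down the chain
        x += length * math.cos(heading)  # advance along the current segment
        y += length * math.sin(heading)
    return x, y, math.degrees(heading)
```

For two unit segments with joint readings of 90° and -90°, `armature_end_pose([1.0, 1.0], [90, -90])` places the end point at approximately (1, 1), pointing along +x.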

uses:

advantages are:

disadvantages are:

small transmitter and one medium-sized sensor

each transmitter emits ultrasonic pulses which are received by microphones on the sensor (usually arranged in a triangle)

as the pulses reach the different microphones at slightly different times, the position and orientation of the transmitter can be determined
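A minimal sketch of the position part follows. It assumes time of flight is measured from a known emission time, that sound travels at about 343 m/s (dry air near 20 °C), and that the three microphones sit at (0, 0, 0), (d, 0, 0) and (i, j, 0) in the sensor frame, which is one way to lay out a triangle. It recovers only the position of a single emitter; orientation would need several emitters. The layout and names are hypothetical.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, approximate value for dry air near 20 °C (assumption)

def trilaterate(times, d, i, j, c=SPEED_OF_SOUND):
    """Locate an ultrasonic emitter from time of flight to three microphones.

    Microphones are assumed at (0,0,0), (d,0,0) and (i,j,0) in the sensor frame,
    with the emitter on the +z side of that plane.
    times: time of flight (seconds) to each microphone.
    """
    r1, r2, r3 = (c * t for t in times)  # convert times to distances
    x = (r1**2 - r2**2 + d**2) / (2 * d)
    y = (r1**2 - r3**2 + i**2 + j**2) / (2 * j) - (i / j) * x
    z_sq = r1**2 - x**2 - y**2
    # Measurement noise can push z_sq slightly negative; clamp it to zero.
    z = math.sqrt(max(z_sq, 0.0))
    return x, y, z
```

Because the distances come from c × t, a timing error of just 0.1 ms already corresponds to roughly 3.4 cm of range error, which is why these systems need precise timing.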

uses:

advantages are:

disadvantages are:

examples:

LEDs or reflective materials are placed on the object to be tracked

video cameras at fixed locations capture the scene

image processing techniques are used to locate the object
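One very simple image-processing step is sketched below. It assumes the LED or reflective marker is the brightest thing in a grayscale frame, thresholds the image, and takes the centroid of the bright pixels. The threshold value and function name are hypothetical. Production systems add blob labeling, lens calibration and triangulation across several cameras.

```python
def marker_centroid(image, threshold=200):
    """Return the (x, y) centroid of pixels at or above threshold, or None.

    image: list of rows of grayscale intensities (0-255).
    x is the column index, y is the row index.
    """
    sum_x = sum_y = count = 0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value >= threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None  # marker not visible in this frame
    return sum_x / count, sum_y / count
```

Averaging over many pixels gives a sub-pixel estimate of the marker's image location. A single camera yields only a 2D position, so several fixed cameras are combined to locate the marker in 3D.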

With fast enough processing you can also use computer vision techniques to isolate a head in the image and then use the head to find the position of the eyes.

A very good optical tracking system is the A. R. T. system from Germany: http://www.ar-tracking.de/

In CAVE2 we use a Vicon camera tracking system.

self-contained

gyroscopes / accelerometers used

knowing where the object was and its change in position / orientation, the device can 'know' where it now is

tend to work for limited periods of time, then drift.
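A minimal dead-reckoning sketch for a single rotation axis follows. It assumes a gyroscope that reports angular rate at a fixed sample interval. Any small constant bias in the rate is integrated along with the real motion, so the heading error grows with time, which is the drift described above. The function name and the bias in the usage note are hypothetical.

```python
def integrate_heading(rates_deg_s, dt, start_deg=0.0):
    """Dead-reckon heading by summing angular-rate samples over time.

    rates_deg_s: gyroscope readings in degrees per second.
    dt: sample interval in seconds.
    Returns the heading estimate after each sample.
    """
    heading = start_deg
    history = []
    for rate in rates_deg_s:
        heading += rate * dt  # previous heading plus the change during this interval
        history.append(heading)
    return history
```

Take a device that is actually stationary but whose gyroscope has a hypothetical bias of 0.01 °/s, sampled at 100 Hz. After 100 seconds it reports a heading about 1 degree off (`integrate_heading([0.01] * 10000, 0.01)[-1]`), and the error keeps growing.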

Combinations

Intersense uses a combination of acoustic and inertial tracking. Inertial tracking can deal with fast movements, and acoustic tracking keeps the inertial tracking from drifting.
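The notes do not say how Intersense combines the two. A complementary filter is one common, simple way to blend such sensors, shown here for one position axis. The inertial step supplies fast changes, and a small weight on each acoustic measurement pulls the estimate back so that drift stays bounded. The blend factor `alpha` and the function name are hypothetical.

```python
def fuse_step(estimate, inertial_delta, acoustic_position, alpha=0.98):
    """One complementary-filter update for a single axis.

    estimate: previous fused position.
    inertial_delta: change in position integrated from the inertial sensor this step.
    acoustic_position: absolute position measured acoustically this step.
    alpha: weight on the inertial prediction (close to 1 keeps the response fast).
    """
    predicted = estimate + inertial_delta  # fast, but drifts on its own
    # Blend in a little of the absolute acoustic measurement to cancel drift.
    return alpha * predicted + (1.0 - alpha) * acoustic_position
```

Suppose the object is actually still at 0 but the inertial sensor adds a hypothetical bias of 0.001 per step. Pure integration would then be off by 1.0 after 1000 steps, while the fused estimate settles near alpha × bias / (1 − alpha) ≈ 0.049.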

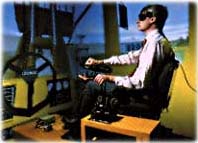

Rather than looking for a generic solution, specialized VR applications are usually better served by specialized tracking hardware. These pieces of specialized hardware generally replace tracking of the user with an input device that handles navigation.

To test its cab designs, Caterpillar places the actual cab hardware into the CAVE so the driver controls the virtual loader in the same way the actual loader would be controlled. The positions of the gear shift, the pedals, and the steering wheel determine the location of the user in the virtual space.

A treadmill can be used to allow walking and running within a confined space. More sophisticated multilayer treadmills or spheres allow motion in a plane.

A bicycle with handlebars allows the user to pedal and turn, driving through a virtual environment.
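A minimal sketch of how such a device can drive navigation in place of body tracking follows. It assumes the bicycle reports a forward speed (from pedaling) and a handlebar angle. The user's virtual position is updated once per frame with a simple Euler step of the kinematic bicycle model. The wheelbase value and names are hypothetical.

```python
import math

def bike_step(x, y, heading, speed, steer_deg, dt, wheelbase=1.0):
    """Advance the viewer's virtual position by one frame.

    speed: forward speed from the pedals (m/s).
    steer_deg: handlebar angle; 0 means straight ahead, positive turns left.
    wheelbase: distance between the wheels (m), a hypothetical default.
    Kinematic bicycle model: turn rate = speed / wheelbase * tan(steer).
    """
    heading += speed / wheelbase * math.tan(math.radians(steer_deg)) * dt
    x += speed * math.cos(heading) * dt
    y += speed * math.sin(heading) * dt
    return x, y, heading
```

Each frame, the returned (x, y, heading) becomes the viewpoint in the virtual world, so the user's physical body stays in place while the scene moves past.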

a bit more about latency

Accuracy needs to come from the tracker manufacturers. Latency is partly our fault.

Latency is the sum of:

Another important point about latency is that it should be consistent. If the latency isn't too bad, people will get used to it, but it's very annoying if the latency isn't consistent (jitter).
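A minimal sketch of how consistency could be checked follows. It assumes each frame records when the tracker sampled the head and when the matching image reached the display, both in milliseconds. The mean is the latency users adapt to. The spread, taken here as the population standard deviation, is one simple measure of jitter. The names are hypothetical.

```python
import statistics

def latency_stats(sample_times_ms, display_times_ms):
    """Return (mean latency, jitter) in milliseconds.

    Latency per frame is display time minus tracker sample time;
    jitter is taken as the population standard deviation of those latencies.
    """
    latencies = [d - s for s, d in zip(sample_times_ms, display_times_ms)]
    if not latencies:
        raise ValueError("no frames")
    return statistics.mean(latencies), statistics.pstdev(latencies)
```

A steady 40 ms on every frame gives zero jitter. Frames alternating between 30 ms and 50 ms have the same 40 ms mean but 10 ms of jitter, which users find much harder to adapt to.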

How many sensors is enough?

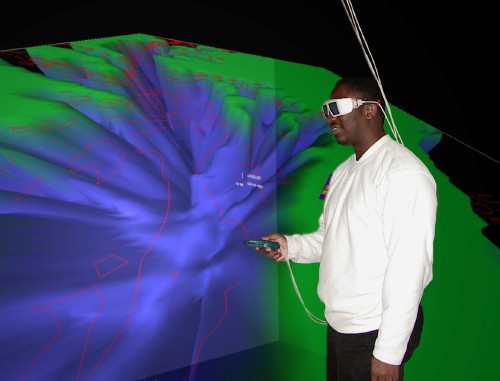

Tracking the head and hand is often enough for working with remote people as avatars.


A user putting on sensors and another user dancing with 'the thing growing' at SIGGRAPH 98 in Orlando. This application tracked the head, both hands and the lower back.

Before next class please read the following paper:

Interface and Interaction

For Tuesday please read the following paper: