There are two basic parts to doing CAVE video recording: (1) getting video out of the computer, and (2) configuring your CAVE application. They are interrelated: the method you choose to get video out will determine some of the CAVE configuration and, conversely, a specific configuration that you wish to use may determine how you must set up the hardware.

Some general 'styles' of recording which can be done are:

On Reality Engines, the command /usr/sbin/vout controls the area of the screen which is output by the encoder. On IRs, you must use ircombine to control the encoder; the encoder is treated as a separate channel, but it uses some of the same circuitry as channel 1, and so the two cannot be used simultaneously.

The video from the encoder will not be as high quality as a component signal produced by one of the other methods below, but this is by far the easiest method of getting video out. If you don't need broadcast-quality material, the encoder is a good choice.

The composite and S-video connectors on the back of an Onyx/IR are shown here:

When using Sirius on an IR, you should also make sure to define a Sirius channel in the current video combination (created with ircombine), or the video daemon will have problems starting.

My experience with the DIVO so far is practically nil, so I won't attempt to document it further.

/usr/gfx/setmon -n NTSC will switch the display to an NTSC video format. Note that this will still be an RGB signal, however, and so must generally be converted by some other video hardware before it is fed to a recording deck.

On an IR, you will need to create a combination with ircombine that loads the appropriate channel with the NTSC video format (vfo).

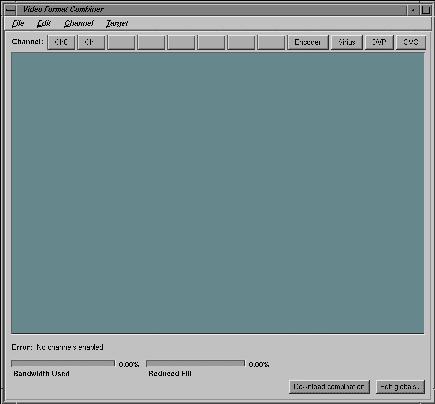

When you run ircombine, you will see this interface:

To choose the format for a channel, click that channel's button. A file browser will pop up, showing the vfo's (video formats) that are available. Their names normally take the form widthxheight_frequency; some format names have suffixes, such as "s" for stereo. For example, 1280x1024_60 is a 1280 x 1024 channel at 60Hz; 1024x768_96s is a 1024 x 768 channel at 96Hz, stereo; 646x485_30i is an NTSC style format - 646 x 485 at 30Hz, interlaced. Once you have selected the format, a blue rectangle will appear in the central window, showing the portion of the framebuffer covered by the channel. You can move this area around using the mouse, for instance if you want to tile multiple channels.
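The naming convention described above can be illustrated with a small parser. This is only a sketch: the function and type names (`parse_vfo_name`, `VideoFormat`) are hypothetical, it assumes names follow the usual widthxheight_frequency pattern with an optional lowercase suffix, and it does not handle file extensions or any formats that deviate from that pattern.

```python
import re
from typing import NamedTuple


class VideoFormat(NamedTuple):
    """Fields recovered from a format name (hypothetical helper type)."""
    width: int
    height: int
    frequency_hz: int
    suffix: str  # e.g. "s" (stereo) or "i" (interlaced); "" if none


# Assumed pattern: widthxheight_frequency, optionally followed by letters.
_VFO_NAME = re.compile(r"^(\d+)x(\d+)_(\d+)([a-z]*)$")


def parse_vfo_name(name: str) -> VideoFormat:
    """Split a name such as '1024x768_96s' into its components."""
    match = _VFO_NAME.match(name)
    if match is None:
        raise ValueError(f"not a widthxheight_frequency name: {name!r}")
    width, height, frequency, suffix = match.groups()
    return VideoFormat(int(width), int(height), int(frequency), suffix)


if __name__ == "__main__":
    for name in ("1280x1024_60", "1024x768_96s", "646x485_30i"):
        print(name, "->", parse_vfo_name(name))
```

Since the names only "normally" take this form, a format that fails to parse is not necessarily invalid; the sketch simply reports it.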

If you are going to use the built-in encoder, click the 'Encoder' button. The channel rectangle will immediately appear, as there is no choice of what video format to use. Position the channel where you want it, relative to the other channel(s) of the display. Remember that you cannot use the encoder and channel 1 simultaneously, so channel 1 must remain undefined in this case.

If you are going to use the Sirius option, click on the 'Sirius' button (it will be greyed-out if ircombine does not detect any Sirius board on the pipe that you are configuring). The channel rectangle will appear immediately, as with the encoder. videoout will allow you to control what part of the screen is output by the Sirius, so the channel's location in ircombine is not important.

If you are going to use a monitor output channel, assign an NTSC format (e.g. 646x485_30i) to the channel that is connected to your video recorder.

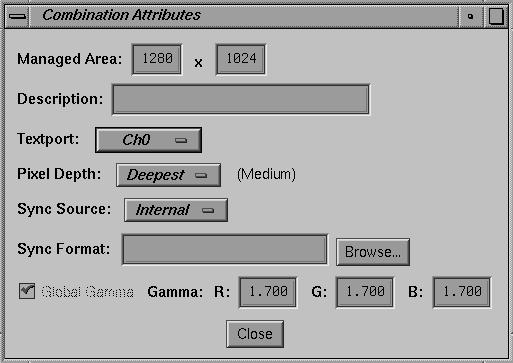

By default, ircombine uses a 1280x1024 managed area for the graphics pipe. If you want to have multiple channels which don't overlap, you may need a larger area. In this case, use the "Edit globals..." button to modify the managed area. You will get the following popup:

Some other things to be aware of:

The wall that will be used for the video output must be configured so that its window matches the section of frame buffer that is being recorded, which depends on which output method you're using (above). An NTSC video image is roughly 640x480 pixels; the exact size depends on the hardware - for instance, the Sirius videoout window is 648x486. Once you know the size and location of the area that will be recorded, use this information in your CAVE configuration file for the output wall's window geometry. For example:

The simplest HMD-style display to use is the simulator wall.

ircombine

The program /usr/gfx/ircombine is used to create new combinations of video formats for Infinite Reality systems. The combinations can then be loaded with /usr/gfx/setmon (or directly from ircombine). ircombine requires root access to run; setmon does not require root, except when changing the default combination stored in EEPROM.

A video combination consists of one or more channels. Channels correspond to the physical monitor outputs on the IR, plus the NTSC encoder and the Sirius. The buttons along the top of the ircombine interface (Ch0, Ch1, ...) are used to select and define the channels.

Change the two numbers on the top line to the size you want. (If you want the pipe to be genlocked to an external video source, use the "Sync Source" and "Sync Format" options in this popup to set that.)
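When tiling channels so that they do not overlap, it can help to work out beforehand how large the managed area has to be. The following is a rough planning sketch, not part of ircombine: the `Rect` type and both functions are hypothetical, positions are taken as pixel offsets within the managed area, and any hardware restrictions on allowable managed area sizes are not modelled.

```python
from typing import List, NamedTuple, Tuple


class Rect(NamedTuple):
    """A channel's rectangle within the managed area (hypothetical type)."""
    x: int
    y: int
    width: int
    height: int


def overlaps(a: Rect, b: Rect) -> bool:
    """True if the two rectangles share any pixels."""
    return (a.x < b.x + b.width and b.x < a.x + a.width and
            a.y < b.y + b.height and b.y < a.y + a.height)


def required_managed_area(channels: List[Rect]) -> Tuple[int, int]:
    """Smallest width and height that contain every channel rectangle."""
    width = max(c.x + c.width for c in channels)
    height = max(c.y + c.height for c in channels)
    return width, height


if __name__ == "__main__":
    # Example layout (an assumption): a 1280x1024 display channel with a
    # 646x485 NTSC channel placed immediately to its right.
    display = Rect(0, 0, 1280, 1024)
    ntsc = Rect(1280, 0, 646, 485)
    print("overlap:", overlaps(display, ntsc))
    print("managed area needed:", required_managed_area([display, ntsc]))
```

In this example layout the two channels do not overlap, but together they need more than the default 1280x1024 managed area, so the numbers in the popup would have to be increased.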

CAVE Configuration

There are many CAVE configuration options which are relevant to making video recordings. The most important are those that define the graphics display configuration; however, in some cases you may wish to modify the projection or tracking options as well.

The display

Unless you are simply recording a full-screen dump of a single CAVE wall or the IDesk using the Sirius option, you will want to define a separate "wall" for your video output. This wall will be in addition to the regular walls that are displaying in the CAVE or on the IDesk, or possibly in place of one CAVE wall.

WallDisplay simulator :0.0 648x486+0+0

puts an NTSC-sized simulator display in the lower left corner of the screen. Remember that in the CAVE config, Y offsets are measured from the bottom of the screen to the bottom of the window; in X windows, Y offsets are measured from the top of the screen to the top of the window (the geometry argument for videoout uses the X windows orientation). So, on a 1024-pixel-high screen (1024 - 486 = 538), a command for recording the above window would be:

/usr/sbin/videoout -f -nocontrol -geometry 648x486+0+538
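The conversion between the two Y-offset conventions is simple arithmetic, sketched below. The function name is hypothetical, and the 1024-pixel screen height is an assumption (it is the height implied by the +538 offset in the command above); substitute the height of your own screen or managed area.

```python
def cave_to_x_geometry(width: int, height: int, x: int,
                       y_from_bottom: int, screen_height: int) -> str:
    """Convert a CAVE-config window geometry to an X-style geometry string.

    The CAVE config measures Y from the bottom of the screen to the bottom
    of the window; X measures Y from the top of the screen to the top of
    the window.
    """
    y_from_top = screen_height - height - y_from_bottom
    return f"{width}x{height}+{x}+{y_from_top}"


if __name__ == "__main__":
    # The 648x486+0+0 simulator wall from the CAVE config above,
    # assuming a 1024-pixel-high screen.
    print(cave_to_x_geometry(648, 486, 0, 0, screen_height=1024))
    # prints 648x486+0+538
```

The X offset is the same in both conventions, so only the Y value changes.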

The projection

In the configuration file, a CAVE wall can be defined as an "HMD" or "wall" projection type, using the ProjectionData option. The CAVE and IDesk walls are of the "wall" type, but when head-tracking is active, recording this sort of projection on video will tend to look odd - objects shear and the field of view changes as the user moves around. So for the video channel we typically use an "HMD" projection. Of course, having a different type of projection than what the user sees in the CAVE can sometimes make it difficult to precisely frame a view of a scene; in cases where you want to record exactly the view that appears on a CAVE wall, a "wall" projection should be used (often with head tracking disabled - see below).

Tracking

In some cases, such as when you use a "wall" rather than HMD projection, you will want to disable head tracking, in order to keep the camera view steady. To disable tracking entirely, you can simply use the configuration option:

TrackerType none

However, this will also disable wand tracking, which may make interaction difficult. To disable only head tracking, use:

ActiveSensors 1

which tells the CAVElib to only update sensor number 1 (the wand); if you have more than one wand sensor, remember to include them as well on the ActiveSensors line.

Last modified 8 June 1999.

Dave Pape, pape@evl.uic.edu