Figure 2. Third-person view; a close-up of the user in the CAVE, and a distant view of the CAVE in the virtual world.

One of the greatest limitations on application development is the limited availability of actual VR equipment. While it is not uncommon for research labs to have one or more workstations per person, even the most advanced labs typically have no more than a handful of VR systems. This is primarily due to the cost of the systems, which can be much higher than that of an ordinary graphics workstation. Another impediment is the space requirement; a relatively large, open area is needed for tracked users to move about. Until VR has proven itself indispensable to everyday research, few organizations will be willing to invest heavily in the systems. Access problems were even greater during preparation for the Supercomputing '95 GII Testbed event; many researchers were located at remote sites without direct access to the VR hardware that would be used at the Supercomputing conference (the CAVE, ImmersaDesk, and NII/Wall). Such limited access makes development very difficult if the equipment is needed every time an application is tested. When much of the creation and testing of applications can be done on ordinary workstations, however, development goes faster, and the VR hardware is available more often for actual work rather than for debugging code.

Another issue is that current VR systems are not good development environments. Ideally, one would like to be able to do all of one's programming and debugging from within the virtual environment. However, this is not generally practical yet. Most developers would prefer to use a standard windowed, graphical desktop environment while working on applications. Rather than having to shift constantly between VR and workstation environments, we would like to be able to test VR applications on the normal workstation console.

We have created a software simulator for VR development; it simulates various special features of VR systems with an interface that can be run on an ordinary workstation. The simulator is implemented as part of the CAVE library, the programming library originally written to support the CAVE hardware [1]. The simulator can, however, be used to develop applications for a number of VR systems, including CAVEs, ImmersaDesks, and head-coupled displays. The library itself has been designed so that the use of the simulator or any supported hardware is entirely transparent to application code.

Input takes numerous forms in different VR systems, but viewed abstractly, it can be treated as just two sources of data: three-dimensional tracking data and control device data. Three-dimensional tracking can be done by electromagnetic, acoustic, or mechanical systems; in the end, they merely report the position and orientation of the user's head and other tracked objects (typically the user's hand). In the simulator, we maintain head and hand position and orientation data. The user can move and rotate these simulated tracking sensors in the virtual space with keyboard and mouse controls. In particular, we find that using the mouse for the simulated hand works well, as it is a fairly natural mapping between the two interaction paradigms, even though the mouse is limited to two degrees of freedom at a time. In current VR systems, the area in which the tracked user can move is limited by such things as the size of a CAVE, the length of head-mounted display (HMD) cables, or the maximum range of the tracking hardware itself. To approximate this, the simulated tracked positions are restricted to remain within a configurable finite area.
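The restriction of simulated sensors to a finite area, and the mapping of two-dimensional mouse motion onto the hand sensor, can be sketched as follows. This is a minimal illustration in Python, not CAVE library code; the names `SimulatedSensor`, `move_sensor`, and `mouse_to_hand_delta`, the bounds, the orientation convention, and the pixel scale are all assumptions made for the example.

```python
from dataclasses import dataclass, field

# Hypothetical bounds of the tracked volume, in feet: a 10 x 10 x 10 cube
# centered on the origin in x and z, with the floor at y = 0.
BOUNDS_MIN = (-5.0, 0.0, -5.0)
BOUNDS_MAX = (5.0, 10.0, 5.0)


@dataclass
class SimulatedSensor:
    """One simulated tracking sensor, e.g. the head or the hand."""
    position: list = field(default_factory=lambda: [0.0, 5.0, 0.0])
    # Orientation as (azimuth, elevation, roll) in degrees; an assumed convention.
    orientation: list = field(default_factory=lambda: [0.0, 0.0, 0.0])


def clamp(value, low, high):
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def move_sensor(sensor, delta, bounds_min=BOUNDS_MIN, bounds_max=BOUNDS_MAX):
    """Translate the sensor by delta, keeping it inside the bounded volume."""
    for axis in range(3):
        moved = sensor.position[axis] + delta[axis]
        sensor.position[axis] = clamp(moved, bounds_min[axis], bounds_max[axis])
    return sensor


def mouse_to_hand_delta(dx_pixels, dy_pixels, plane="xy", units_per_pixel=0.01):
    """Map 2D mouse motion onto two of the three axes.

    The mouse offers only two degrees of freedom at a time, so `plane`
    (for example toggled by a modifier key) selects which two axes move.
    """
    first, second = {"xy": (0, 1), "xz": (0, 2)}[plane]
    delta = [0.0, 0.0, 0.0]
    delta[first] = dx_pixels * units_per_pixel
    # Screen y grows downward, so it is negated here (an assumed mapping).
    delta[second] = -dy_pixels * units_per_pixel
    return delta
```

For example, a hand sensor at x = 4.5 that is dragged 100 pixels to the right would move to x = 5.5 without the restriction; with it, the sensor stops at the boundary, x = 5.0.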

Control devices include buttons on a wand or 3D mouse, joysticks, and data gloves. The wand normally used in the CAVE has a set of buttons and a joystick; in the simulator, we use the mouse buttons for the wand buttons, and use the mouse position in place of the joystick (when not using it for hand movement). A data glove can be approximated using keyboard controls, although that may not be the most natural interface, depending on the application. Other input methods, such as a dial box (if the workstation is equipped with one) or a Motif-based widget interface, may be used to simulate arbitrary control devices. These approaches remain to be more fully explored in the CAVE simulator.
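As an illustration of the wand mapping, the sketch below converts mouse state into three button flags and a joystick value normalized to [-1, 1] on each axis. It is a Python sketch under our own assumptions: the function name `wand_from_mouse`, the button names, and the normalization are hypothetical and are not taken from the CAVE library.

```python
def wand_from_mouse(buttons_down, mouse_x, mouse_y, width, height):
    """Return (buttons, joystick) for a simulated wand.

    buttons_down: set of pressed mouse buttons ("left", "middle", "right").
    mouse_x, mouse_y: pointer position in pixels, origin at the top-left.
    width, height: size of the simulator window in pixels (each > 1).
    """
    buttons = [name in buttons_down for name in ("left", "middle", "right")]

    # Normalize so the window center is (0, 0) and the edges are +/- 1.
    joy_x = 2.0 * mouse_x / (width - 1) - 1.0
    joy_y = 1.0 - 2.0 * mouse_y / (height - 1)  # screen y grows downward

    # Clamp in case the pointer leaves the window.
    joy_x = max(-1.0, min(1.0, joy_x))
    joy_y = max(-1.0, min(1.0, joy_y))
    return buttons, (joy_x, joy_y)
```

With a 101 x 101 pixel window, the pointer at the center (50, 50) gives a joystick value of (0.0, 0.0), and the top-left corner gives (-1.0, 1.0).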

A fully immersive display is not possible on a normal CRT monitor, and moreover, as described above, we want access to a standard desktop working environment when developing an application. Hence, in the simulator, the display is in an ordinary X window like that of a non-VR application. The simulated display includes a number of special features to accurately represent the final system for which an application is being developed; most of these features are configurable, or can be toggled on and off at run time.

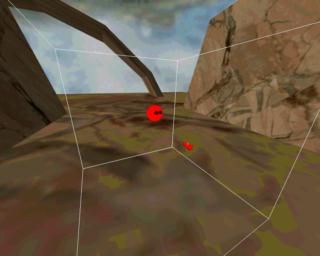

In its default mode, the simulator window presents a perspective view of the virtual environment, from the viewpoint of the simulated user's head. This approximates what the user would see when using the actual VR system (Figure 1). As different VR display technologies have different fields of view, the FOV of the simulated display can be configured to match that of the target hardware. In the case of a CAVE or ImmersaDesk, the user's view is limited not so much by a specific FOV of the hardware as by the area covered by the projection displays. To show this restriction, the simulator can black out the parts of the virtual world where there are no display screens. The simulator display also includes an option to use the same off-axis perspective projection as would be used when the scene is viewed in a CAVE. Although this does not produce a properly realistic display in the desktop environment, it can be important when testing applications whose rendering is strongly affected by the exact projection used.
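The off-axis projection mentioned above can be illustrated for a single wall. The Python sketch below uses the standard construction for an axis-aligned screen: the wall's edges, measured relative to the eye, are scaled back to the near clipping plane to give the parameters of an asymmetric viewing frustum (in the style of OpenGL's `glFrustum`). The function name and the example wall dimensions are assumptions for illustration; this is not the CAVE library's own code.

```python
def off_axis_frustum(eye, wall_left, wall_right, wall_bottom, wall_top,
                     wall_z, near, far):
    """Frustum (left, right, bottom, top, near, far) for a wall facing +z.

    eye: (x, y, z) position of the tracked eye, in the same units as the wall.
    wall_left .. wall_top: extents of the wall in x and y.
    wall_z: z coordinate of the wall plane; the eye must be in front of it.
    """
    ex, ey, ez = eye
    distance = ez - wall_z  # perpendicular distance from eye to wall
    if distance <= 0.0:
        raise ValueError("eye must be in front of the wall")

    # Similar triangles: project the wall's edges onto the near plane.
    scale = near / distance
    return (
        (wall_left - ex) * scale,
        (wall_right - ex) * scale,
        (wall_bottom - ey) * scale,
        (wall_top - ey) * scale,
        near,
        far,
    )
```

When the eye is centered in front of the wall, the result is an ordinary symmetric frustum; as the eye moves to one side, the left and right values become unequal, which is what makes the projection off-axis.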

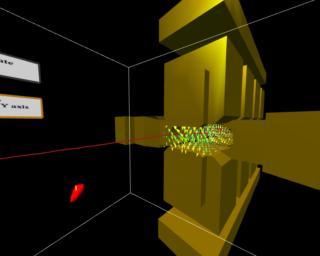

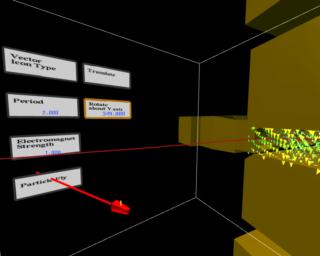

Another display mode available in the simulator is a third-person viewpoint (Figure 2). In this mode the viewpoint is separate from the simulated user's position, allowing one to see the user in the virtual world. This view is strictly a development tool; it is useful for observing how the user interacts with the world, and for judging sizes and distances relative to the user.

The CAVE and related systems use LCD shutter glasses and special stereoscopic video modes of the Silicon Graphics hardware to display stereo images. Workstations that support these special video and rendering modes can also display the simulator views in stereo, providing a closer match to the display of the VR system.

Another special feature of the simulator display is the automatic addition, by the simulator itself, of objects to the rendered scene. These include an outline of the CAVE, and representations of the wand and user's head. A wireframe cube outline shows the boundaries of the CAVE structure; this indicates the area to which the simulated user's movement is restricted, and is useful for seeing whether objects in the environment can actually be reached. A simplified representation of the wand shows the position of the simulated tracked controller. In non-see-through HMDs, the applications themselves must render an icon of the wand or glove, but this is not normally required in other systems, such as the CAVE, where users can see the physical device in their hands. Because no physical wand is visible on the desktop, the wand representation must be added when using the simulator. Last, in the third-person view, a head is rendered at the position of the simulated user, to show the user in relation to the virtual environment.

Our sound system uses a Silicon Graphics Indy workstation and a MIDI-controlled amplifier system; the software runs on any SGI machine with audio hardware. When working with the simulator, the sound software can be run with just the workstation audio to hear a non-spatialized version of the application sound.

Another important aspect of a VR system, beyond the basic input, output, and computation, is the synchronization of all these parts. Most of this synchronization is done through standard Unix inter-process communication tools. As long as the simulator is implemented in the same multiprocessed manner as the actual VR system, simulating the IPC is not an issue. However, some of our VR systems use more than one computer, to drive multiple displays, for instance. The computers are connected with high-speed, low-latency network hardware, such as Scramnet reflective memory, which is used to synchronize the displays and to share tracking and application data. We want to enable application developers to reproduce this environment in order to test any application-specific communication between the machines. The necessary high-performance network hardware is not available on most development workstations, and so must be simulated. This is done by running multiple, independent copies of an application on a single machine, and communicating between the copies using shared memory in place of networking.
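The idea of replacing the network with shared memory can be sketched with Python's standard `multiprocessing.shared_memory` module. In this illustration, one copy of an application creates a named shared block and publishes a small record; another copy attaches to the block by name and reads it. The record layout (a frame counter and a position) and the function names are assumptions for the example, and any locking or frame synchronization is omitted. For brevity, both handles are opened in the same process here, whereas in practice each handle would belong to a separate copy of the application.

```python
import struct
from multiprocessing import shared_memory

# Hypothetical record shared between copies: frame counter + xyz position.
RECORD = struct.Struct("<I3f")


def create_channel():
    """Create a shared block large enough for one record (the 'owner' copy)."""
    return shared_memory.SharedMemory(create=True, size=RECORD.size)


def attach_channel(name):
    """Attach to an existing block by name (any other copy)."""
    return shared_memory.SharedMemory(name=name)


def publish(channel, frame, position):
    """Write one record at the start of the shared block."""
    RECORD.pack_into(channel.buf, 0, frame, *position)


def read_record(channel):
    """Read the current record; returns (frame, (x, y, z))."""
    frame, x, y, z = RECORD.unpack_from(channel.buf, 0)
    return frame, (x, y, z)


owner = create_channel()
peer = attach_channel(owner.name)  # in practice, done by another process

publish(owner, 7, (1.0, 2.0, 3.0))
latest = read_record(peer)

# Release the handles; only the owner removes the block itself.
peer.close()
owner.close()
owner.unlink()
```

Because the application code only calls `publish` and `read_record`, the same calls could in principle be backed by reflective-memory hardware on the real system; that substitution is the design point, not something this sketch implements.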

Separate processes handle each of the major aspects of an application. One process takes care of reading the tracking and input devices, one or more processes perform the application's computations, and one or more processes, depending on the number of displays, perform the rendering. The different processes communicate through shared memory. The tracking/input process is normally handled entirely by CAVE library code; the data received from the devices is stored in a standard format in shared memory, as a list of tracked sensors' positions and orientations and the states of a set of buttons and valuators. The specific types of hardware that it should read are defined at run-time in a configuration file. As a result, application code does not need to call any device-specific functions; it merely reads the data in shared memory, without having to know exactly what hardware is being used. Under this system, the keyboard and mouse controls of the simulator are treated as just another selectable tracking device that returns data in the same format as the others. Hence, an application can switch between the simulated tracking and the real tracking of the VR system without any coding changes.
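The device abstraction described above can be sketched as follows. Every input source, including the simulator's keyboard and mouse controls, returns data in one standard form, and a run-time configuration entry selects which source is used. This Python sketch is illustrative only: the class names, the `tracker` configuration key, and the file syntax are hypothetical and do not reproduce the CAVE library's actual configuration format. An ordinary object stands in for the shared-memory record.

```python
from dataclasses import dataclass, field


@dataclass
class Sensor:
    position: tuple = (0.0, 0.0, 0.0)
    orientation: tuple = (0.0, 0.0, 0.0)


@dataclass
class InputState:
    """Standard format seen by applications, whatever the hardware."""
    sensors: list = field(default_factory=list)
    buttons: list = field(default_factory=list)
    valuators: list = field(default_factory=list)


class SimulatorDevice:
    """Stand-in for keyboard/mouse tracking; returns fixed poses here."""

    def read(self):
        return InputState(
            sensors=[Sensor((0.0, 5.0, 0.0)), Sensor((1.0, 4.0, -1.0))],
            buttons=[False, False, False],
            valuators=[0.0, 0.0],
        )


class NullDevice:
    """A device that reports nothing, e.g. when no tracker is attached."""

    def read(self):
        return InputState()


# Registry of selectable devices; real hardware drivers would be added here.
DEVICES = {"simulator": SimulatorDevice, "none": NullDevice}


def parse_config(text):
    """Parse 'key value' lines; '#' starts a comment (assumed syntax)."""
    config = {}
    for line in text.splitlines():
        parts = line.split("#", 1)[0].split(None, 1)
        if len(parts) == 2:
            config[parts[0].lower()] = parts[1].strip()
    return config


def open_device(config):
    """Instantiate the device named in the configuration."""
    return DEVICES[config.get("tracker", "simulator")]()
```

Application code would call only `open_device(...).read()` and inspect the returned `InputState`, so changing the `tracker` entry switches between simulated and real tracking without any change to the application.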

Although the actual rendering is done by application code, the display processes are under the control of the library; the application code is executed via callback functions. By controlling the processes' main loop, the CAVE library code itself is able to synchronize multiple displays and perform any special actions required for different display devices. As with the tracking, the hardware being used is defined in a run-time configuration file. The library then computes the correct viewing projection for the given display and current tracking data; it can do this for a CAVE, an HMD, or the simulator, transparently to the application. The callback approach also allows the simulator display to automatically add the CAVE outline, wand, and user's head representations to the virtual environment being rendered.
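The callback structure can be sketched in a few lines. In this Python illustration, the library side owns the per-frame loop: it sets up the projection for the configured display, calls the application's drawing callback, and then, for the simulator only, appends its own overlay objects. The class and method names are hypothetical, and drawing is represented by appending to a list of commands rather than by real rendering calls.

```python
class DisplayProcess:
    """Library-side display loop; the application supplies only a callback."""

    def __init__(self, display_type):
        self.display_type = display_type  # e.g. "cave", "hmd", "simulator"
        self._draw_callback = None

    def set_draw_callback(self, callback):
        self._draw_callback = callback

    def _projection_for(self, head_position):
        # Placeholder: a real implementation would compute a viewing
        # projection from the display geometry and the tracking data.
        return (self.display_type, tuple(head_position))

    def render_frame(self, head_position):
        """Run one frame and return the list of draw commands issued."""
        commands = [("projection", self._projection_for(head_position))]

        # Application rendering happens inside the library's loop.
        if self._draw_callback is not None:
            self._draw_callback(commands)

        # Only the simulator adds its own objects after the application draws.
        if self.display_type == "simulator":
            commands += [("overlay", "cave_outline"), ("overlay", "wand")]
        return commands


def draw_scene(commands):
    """Example application callback; it knows nothing about the display."""
    commands.append(("app", "scene"))
```

The same `draw_scene` callback can be registered with a `DisplayProcess("cave")` or a `DisplayProcess("simulator")`; only the latter adds the outline and wand, without the application being aware of it.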

Each of the parts of the system (the tracking, controller input, and display) is implemented, and simulated, as a distinct unit of the entire CAVE library. This allows us to configure a system that combines pieces of the simulator with actual VR hardware. For example, if a three-dimensional tracking system is available, it can be used with the simulator in a manner similar to a fishbowl VR approach, as in [4]. Furthermore, the individual units can be used separately from the overall library to support other programming toolkits. IRIS Performer [5] applications and SDSC's WebView (based on OpenInventor) have been developed for and run in CAVEs and on ImmersaDesks using this method.

2. P. A. Appino, J. B. Lewis, L. Koved, D. T. Ling, C. F. Codella. An Architecture for Virtual Worlds. Presence: Teleoperators and Virtual Environments. Vol. 1, Number 1. Winter 1992.

3. C. Shaw, M. Green, J. Liang, Y. Sun. Decoupled Simulation in Virtual Reality with the MR Toolkit. ACM Transactions on Information Systems, 11, 3, July 1993.

4. M. Deering. High Resolution Virtual Reality. ACM SIGGRAPH '92 Proceedings. Chicago, IL, 1992.

5. J. Rohlf, J. Helman. IRIS Performer: A High Performance Multiprocessing Toolkit for Real-Time 3D Graphics. Proceedings of SIGGRAPH 1994 (Orlando, Florida, July 24-29, 1994). pp. 381-394.

6. J. Goldman, T. Roy. Cosmic Worm. IEEE Computer Graphics & Applications. July 1994.