| TeraVision: A scalable, distributed, high-resolution video streaming system |

||

|

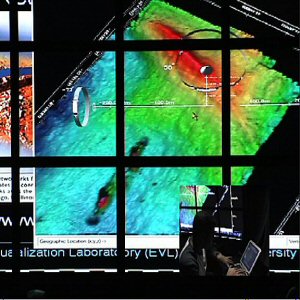

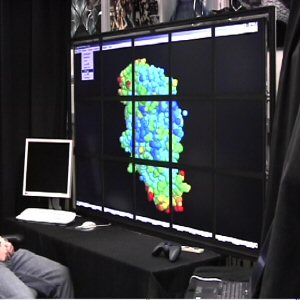

Picture of TeraVision streaming the output of a visualization tool to a tiled display. A scientist uses a 6k x 3k tiled display to view a visualization rendered on his laptop. Visualization of information-heavy 3D structures.

|

What is TeraVision?

TeraVision is a system for streaming displays between computers at high resolutions and high frame rates. It targets scientific visualization applications whose video streams are on the order of several gigabits per second. Because of the volume of data to be streamed, the system is optimized to make efficient use of the new optical gigabit networks.

So what's unique about it?

Most video streaming systems are not designed to handle data of this size. Moreover, in typical scientific visualization applications, the machines generating the visualization already have heavily loaded CPUs and other resources. In other words, very few resources are left for network streaming. For example, a Pentium 4 system at 2 GHz uses 100% of its CPU while trying to push UDP network data at 1 Gbps. So we run up against a wall: the machines used for rendering cannot also be used for pushing high-resolution video streams. TeraVision uses a unique approach: it distributes the load of network streaming to dedicated machines. A typical TeraVision box contains some form of capture hardware to take in a video input, which is typically the VGA/DVI output of the source machine. The server digitizes the video, compresses it, and pushes it out over the network. The following diagram illustrates this approach.
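To put "several gigabits per second" in perspective, here is a rough back-of-the-envelope sketch of the uncompressed bit rate of a video stream. The figures are illustrative assumptions, not TeraVision measurements: 24 bits per pixel, 30 frames per second, and a "6k x 3k" display taken to be 6144 x 3072 pixels. The function names are hypothetical, and protocol overhead and compression are ignored.

```python
GIGABIT = 1_000_000_000  # bits per second carried by one 1 Gbps link


def raw_bitrate_bps(width, height, bits_per_pixel, fps):
    """Uncompressed video bit rate in bits per second."""
    return width * height * bits_per_pixel * fps


def links_needed(bitrate_bps, link_bps=GIGABIT):
    """Minimum number of links of capacity link_bps needed for bitrate_bps.

    Uses integer ceiling division; ignores protocol overhead.
    """
    return (bitrate_bps + link_bps - 1) // link_bps


if __name__ == "__main__":
    # Assumed example sources: a single desktop and a large tiled display.
    sources = {
        "1024 x 768 desktop": (1024, 768),
        "6144 x 3072 tiled display": (6144, 3072),
    }
    for name, (w, h) in sources.items():
        rate = raw_bitrate_bps(w, h, bits_per_pixel=24, fps=30)
        print(f"{name}: {rate / GIGABIT:.2f} Gbps raw, "
              f"{links_needed(rate)} x 1 Gbps link(s)")
```

Under these assumptions a single 1024 x 768 desktop already needs about 0.57 Gbps uncompressed, and the tiled display needs roughly 13.6 Gbps, which is why a rendering machine that is already busy cannot realistically also do the streaming.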

But I have my own special home-grown capture hardware!

TeraVision was designed with the specific needs of the scientific community in mind. It provides a framework in which users can add their own hardware or software modules to capture or process the video. The video input to the TeraVision servers is not restricted to capture hardware; it can come from video cameras, medical imaging hardware, video devices emulated in software, and so on. Users will, however, have to write the plug-ins that interface their hardware or software with the TeraVision engine. The compression modules and network protocols are also pluggable. So if you have a better way to do streaming, just plug it in.

What network protocols are supported?

Currently the system supports TCP, UDP and IP Multicast. Plans are underway to incorporate EVL's RBUDP (Reliable Blast UDP). As mentioned above, the system provides a framework for incorporating new protocols easily. The focus so far has been on protocols useful for streaming video over LFNs (Long Fat Networks), so more work has gone into developing the UDP and multicast modules.

What is the upper limit on the pixels that I can stream using TeraVision?

Theoretically, none! TeraVision is scalable. If the video data is too heavy for one TeraVision server to handle, multiple servers can be added and synchronized to act as one giant capture card. On the display end, the clients can take multiple video streams from the servers and stitch them together to show them as one. Currently, in one of the configurations, one can stream the output of one tiled display to another, where the source and destination are made up of different numbers of tiles. Even the resolutions can differ per tile. Thus it's possible to stream the output of a graphics cluster potentially anywhere, even to your laptop.

Wow! Cool! So can TeraVision cook my dinner and drive my kids to school too?

Not yet. But we plan to keep adding new features in upcoming versions. So you never know :) |
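The stitching step described above, where a client combines streams from several servers into one image, can be sketched roughly as follows. This is a minimal illustration and not TeraVision's actual code: the `Tile` type and `stitch` function are hypothetical names, pixels are plain integers, and each tile is assumed to arrive already decoded with a known position on the destination canvas. Tiles may have different sizes, and no scaling is attempted.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Tile:
    """One decoded frame region from a single (hypothetical) server."""
    x: int                   # left edge of the tile on the canvas
    y: int                   # top edge of the tile on the canvas
    pixels: List[List[int]]  # rows of pixel values


def stitch(tiles, width, height, background=0):
    """Copy every tile into a width x height canvas at its offset.

    Pixels that fall outside the canvas are clipped; later tiles
    overwrite earlier ones where they overlap.
    """
    canvas = [[background] * width for _ in range(height)]
    for tile in tiles:
        for row_index, row in enumerate(tile.pixels):
            cy = tile.y + row_index
            if not 0 <= cy < height:
                continue  # row lies outside the canvas
            for col_index, value in enumerate(row):
                cx = tile.x + col_index
                if 0 <= cx < width:
                    canvas[cy][cx] = value
    return canvas


if __name__ == "__main__":
    # Two 2 x 2 tiles placed side by side form one 4 x 2 frame.
    left = Tile(x=0, y=0, pixels=[[1, 1], [1, 1]])
    right = Tile(x=2, y=0, pixels=[[2, 2], [2, 2]])
    for row in stitch([left, right], width=4, height=2):
        print(row)
```

A real client would also have to keep the streams synchronized so that all tiles belong to the same frame; that part is left out of this sketch.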

|