SAGE: Building a Visualization Cluster with Rocks

November 15th, 2006 - February 15th, 2007

Categories: Devices, Software, Visualization

About

The following is an excerpt of an article from the November 15, 2006 edition of HPC Wire.

To view the full text of the article, please click on the link below.

Building a Visualization Cluster with Rocks

by Steve Jones, Lee Shunn, and Tim McIntire

As high performance computing (HPC) becomes a ubiquitous part of the scientific computing landscape, the science of visualizing HPC datasets has become a critical field of its own. One of the hottest solutions can be found in commoditized high performance visualization clusters (HPVC), which are just starting to pop up in data-rich environments around the world.

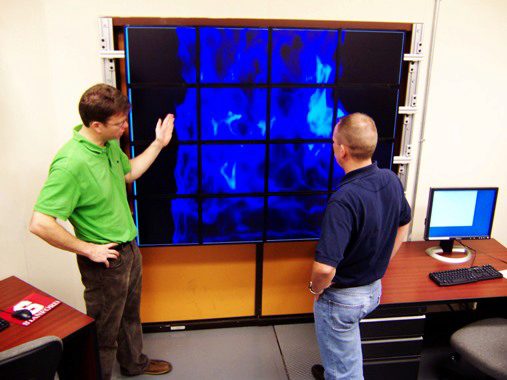

The widespread adoption of HPC has allowed scientists to process massive multivariate datasets that generate so much output that the subsequent analysis becomes unwieldy or, in some cases, impossible. These datasets have given rise to great advances in the science of visualization, pushing traditional workstations beyond their capacity. The only way to visualize this type of output at full resolution is by building an HPVC that uses a multi-CPU, multi-GPU, multi-display solution to achieve resolution and rendering performance well beyond that of single-system solutions.

This article will take you through each step of building a high performance visualization cluster with a tiled wall display using the Rocks cluster distribution (Rocks Cluster Group, University of California, San Diego), SAGE (Cavern Group at the EVL, University of Illinois at Chicago), and Rocks Rolls (from Clustercorp) that integrate commercial CFD applications into the cluster. I/O demands are met by the Panasas file system. The datasets used in this article are from a 65,000-processor run on Blue Gene/L (Lawrence Livermore National Laboratory), with a grid containing 34 billion cells. The visualization cluster is built using Dell, Panasas, and Cisco hardware.