MS Thesis Defense Announcement: “Exploring Deep Learning Techniques for Real-time Graphics”

May 4th, 2018

Categories: Applications, MS / PhD Thesis, Software, Video Games, Deep Learning

About

Date & Time: Friday, May 4th, 2018, 11 am - 1 pm

Location: Room 3036 ERF

Defense committee:

Angus G. Forbes (Chair and Advisor)

Andrew Johnson

G. Elisabeta Marai

Abstract:

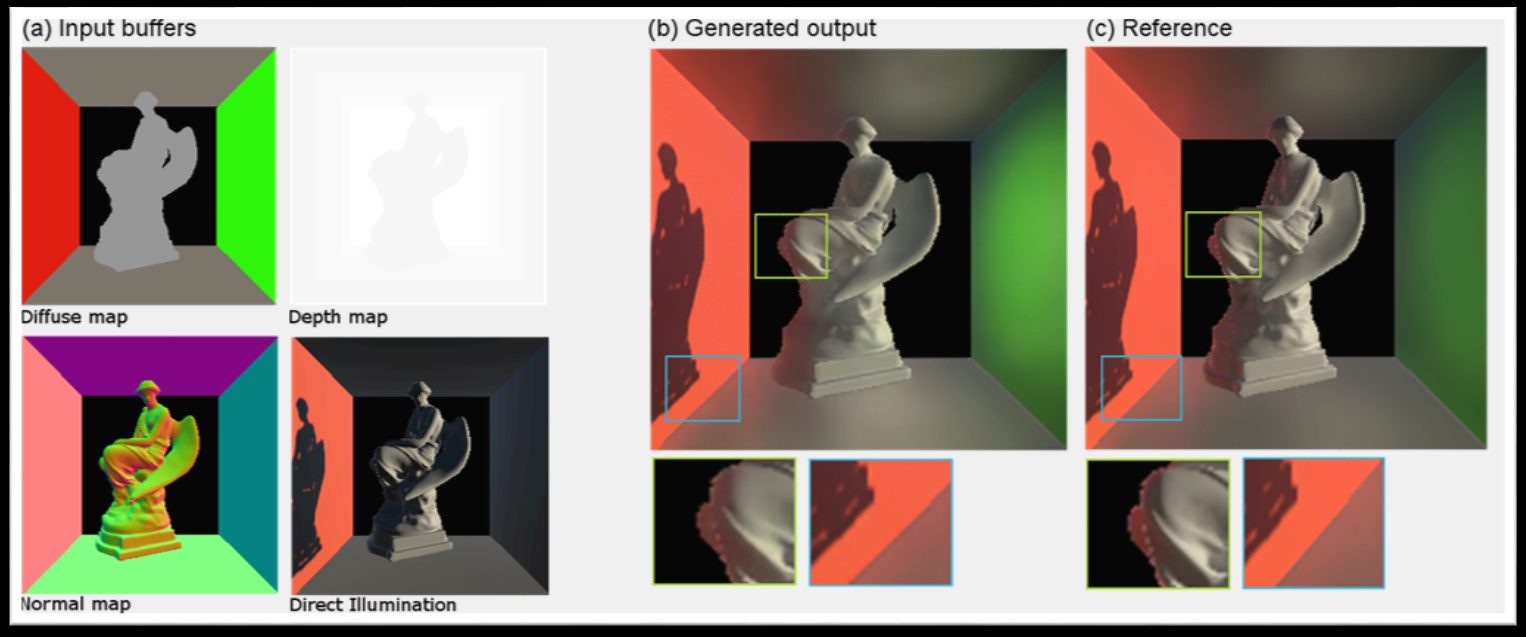

Simulating real-life light in a virtual world is computationally very expensive, even in a limited capacity. The quality of images synthesized by offline rendering algorithms, such as those used in movies, has increased significantly over the years. Real-time applications such as games, however, still rely on non-physically based approximation methods for computing light in order to meet the time budget for rendering a frame. Demand for higher-quality, more realistic graphics in games is high, which calls for better approximation techniques. Acceleration data structures are used to speed up the rendering process by skipping the parts of the virtual scene that are not needed for light calculations. Even with such structures, rendering can take a very long time, depending on the size of the 3D scene. Recently, deep learning approaches have shown great success in image processing problems. Although neural networks are extensively used for training AI agents and other parts of a game, only a handful of studies exist in the 3D rendering space.

In this work, I explore deep-learning-based approaches to 1) approximate indirect illumination and soft shadows from image-space buffers, and 2) build acceleration structures for faster rendering. I also present evaluations of these networks to gain insight into their performance and limitations.