Preliminary Examination Announcement: “Towards a Natural Language Interface for Exploring Data Visualization”

September 27th, 2019

Categories: Applications, Visualization, Natural Language Processing, Visual Analytics, Visual Informatics

About

PhD Student: Abhinav Kumar

Date: September 27, 2019

Time: 1:00 PM to 3:00 PM

Location: Room 2068, Engineering Research Facility

Committee:

Barbara Di Eugenio (Chair and Advisor)

Andrew Johnson

Natalie Parde

Cornelia Caragea

Kallirroi Georgila

Abstract:

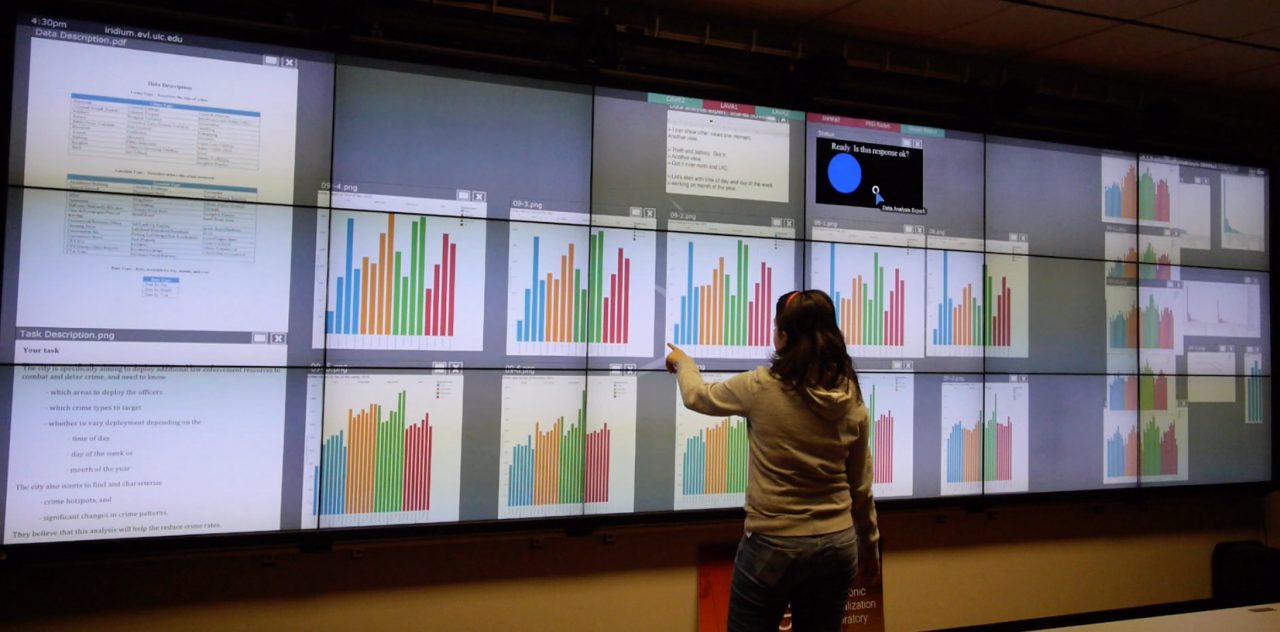

Users who are new to visualization often struggle to translate their queries into an effective visual representation when exploring data. This research aims to address that problem by automatically constructing and managing appropriate visualizations. I focus in particular on the language processing, which is challenging because spoken dialogue is often under-specified, leaving it open to interpretation and, in our case, potentially producing the wrong visualization. My main contributions are as follows. First, I am developing language processing for the multimodal setting: I worked with others to collect and annotate a corpus of dialogue data, comprising speech text and hand gestures, gathered through experiments with human subjects. Second, I used local context to build a module that interprets the underlying intent of what the user says. Third, given the small size of our dataset, I augmented it using paraphrasing and showed that the intent interpretation module improved on the augmented data. As part of my proposed work, I will work on text segmentation to identify the boundaries of the local context that my current intent interpretation module operates on. I also plan to develop a model that captures references made through language and hand-pointing gestures and determines the appropriate target visualizations for them.