Articulate - PhD Prelim Presentation, Yiwen Sun

March 15th, 2010

Categories: Applications, Human Factors, MS / PhD Thesis, Software, Visualization

About

Date: Monday, 3/15/2010

Time: 12:15 PM CDT

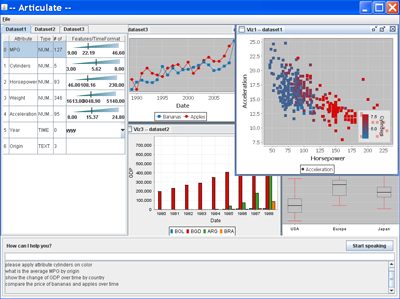

EVL PhD candidate Yiwen Sun presents her current research and thesis preliminary on Monday, March 15th, at the Electronic Visualization Laboratory Cybercommons. Her research, “Articulate: a Semi-automated System for Translating Natural Language Queries into Meaningful Visualizations,” focuses on using natural language processing and machine learning methods to let users control a visualization system through its user interface.

While many visualization tools offer sophisticated functions for charting complex data, they still expect users to have a high degree of expertise with the tools in order to create an effective visualization. I propose Articulate, a semi-automated visual analytic system guided by a conversational user interface that lets users verbally describe, and then manipulate, what they want to see. Natural language processing and machine learning methods translate the imprecise sentences into explicit expressions, and a heuristic graph generation algorithm then creates a suitable visualization. The goal is to relieve the user of the burden of having to learn a complex user interface in order to craft a visualization.
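
As a rough illustration of the pipeline described above (imprecise sentence, then explicit expression, then heuristic choice of graph), the following is a minimal Python sketch. It is not Articulate's implementation: simple keyword matching stands in for the natural language processing and machine learning steps, and every name in it (`TASK_KEYWORDS`, `classify_task`, `find_attributes`, `choose_chart`, `translate`), along with the keyword lists and chart rules, is a hypothetical assumption made for this example.

```python
import re

# Hypothetical keyword lists standing in for a trained classifier.
# These are assumptions for illustration, not taken from Articulate.
TASK_KEYWORDS = {
    "relationship": {"relationship", "correlation", "versus", "vs", "against"},
    "trend": {"trend", "over", "time", "change", "changes"},
    "comparison": {"compare", "comparison", "difference"},
    "distribution": {"distribution", "spread", "range", "histogram"},
}


def classify_task(query):
    """Guess the analytic task by counting keyword hits in the query."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    scores = {task: len(words & kws) for task, kws in TASK_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"


def find_attributes(query, columns):
    """Return the data columns whose names appear in the query text."""
    q = query.lower()
    return [c for c in columns if c.lower() in q]


def choose_chart(task, attributes):
    """Pick a chart type with simple, hand-written heuristic rules."""
    if task == "relationship" and len(attributes) >= 2:
        return "scatter plot"
    if task == "trend":
        return "line chart"
    if task == "comparison":
        return "bar chart"
    if task == "distribution":
        return "histogram"
    return "table"  # fallback when no rule applies


def translate(query, columns):
    """Turn a free-form query into an explicit visualization request."""
    task = classify_task(query)
    attrs = find_attributes(query, columns)
    return {"task": task, "attributes": attrs, "chart": choose_chart(task, attrs)}


if __name__ == "__main__":
    columns = ["mpg", "horsepower", "weight"]
    print(translate("Show the correlation of horsepower versus mpg", columns))
```

A real system would replace `classify_task` with a learned model and would consider the data types of the matched attributes when choosing a graph; the sketch only shows how the stages connect.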