Articulate: Creating Meaningful Visualizations from Natural Language

March 18th, 2012

Categories: Applications, MS / PhD Thesis, Software, Visualization

Authors

Sun, Y.

About

While many visualization tools exist that offer sophisticated functions for charting complex data, synthesizing diverse information and deriving insight from abstract data, they still expect users to possess a high degree of expertise in wielding the tools to create an effective visualization. Unfortunately, the users of such tools are usually lay people or domain experts with marginal knowledge of visualization techniques. Because of the steep learning curve, these users typically cannot take advantage of the latest advances in visualization tools; they would rather focus on the data, and often end up resorting to traditional bar charts or spreadsheets. To facilitate the use of advanced visualization tools, I propose an automated visualization framework: Articulate. The goal is to provide a streamlined experience to non-expert users, allowing them to focus on using the visualizations effectively to generate new findings.

The 2008 National Science Foundation workshop report on “Enabling Science Discoveries through Visual Exploration” also noted that “there is a strong desire for conversational interfaces that facilitate a more natural means of interacting with science.” Even a decade ago this would have seemed far-fetched, but today there is renewed interest in natural language as an interface to computing, with a variety of successful models that can understand the meaning of verbal descriptions in search engines, recommender systems, educational technology and health applications. This inspired me to adopt a natural language interface in the automated visualization framework, allowing an end user to pose verbal inquiries and letting the system assume the burden of determining the appropriate graph and presenting the results. It is hoped that such a capability can reduce the learning curve necessary for effective use of visualization tools, and thereby expand the population of users who can successfully conduct visual analysis.

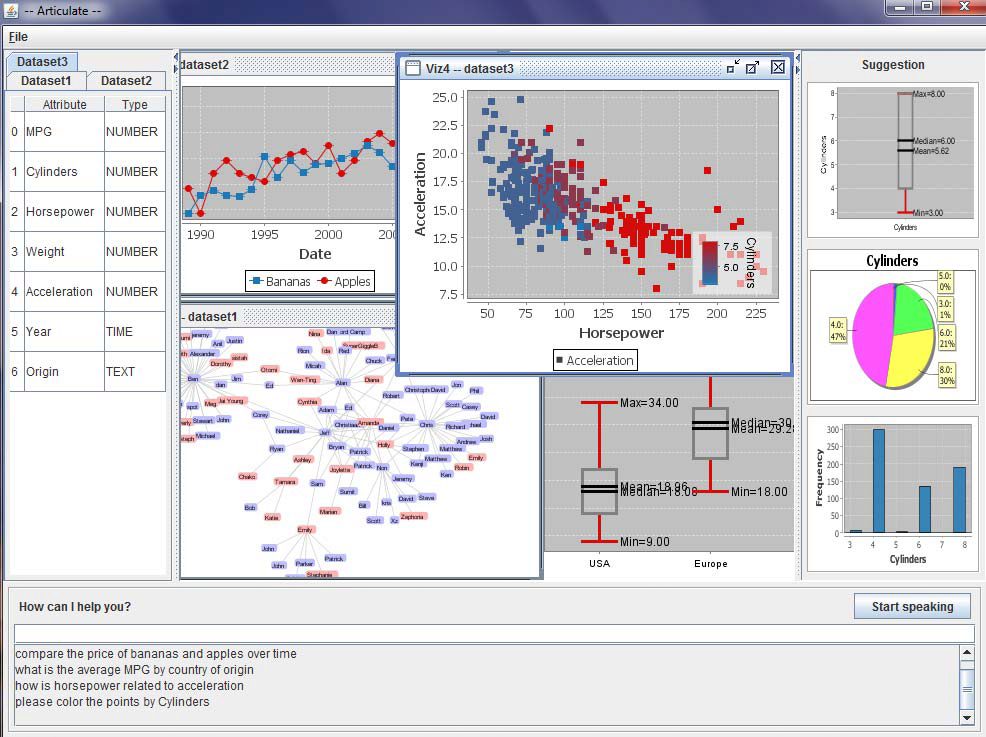

In this dissertation I present Articulate, an approach toward enabling non-visualization experts to leverage advanced visualization techniques through the use of natural language as the primary interface for crafting visualizations. The main challenge in this research is determining how to translate imprecise verbal queries into precise and meaningful visualizations. In brief, the process involves three main steps: first, extracting syntactic and semantic information from a verbal query; then, applying a supervised learning algorithm to automatically translate the user’s intention into explicit expressions; and finally, selecting a suitable visualization to depict the expression. Although the initial prototype produces information visualizations, I will also demonstrate how the approach can be applied to scientific visualization, and describe the user studies that validate the approach.
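The three-step pipeline described above can be illustrated with a toy sketch. This is a minimal, hypothetical example, not the Articulate implementation: step 1 is reduced to plain word tokenization, step 2 uses a multinomial naive Bayes classifier (a swapped-in choice; the abstract says only "a supervised learning algorithm"), and the predicted intent label stands in for the "explicit expression" that step 3 maps to a chart type through a fixed lookup. The intent labels, the chart mapping, the training queries, and the names `NaiveBayesIntentClassifier` and `articulate` are all assumptions made for illustration.

```python
import math
import re
from collections import Counter, defaultdict

# Hypothetical intent categories and the chart type each maps to (step 3).
CHART_FOR_INTENT = {
    "comparison": "bar chart",
    "trend": "line chart",
    "relationship": "scatter plot",
    "composition": "pie chart",
}

# Hypothetical labeled queries used to train the classifier (step 2).
TRAINING = [
    ("compare the sales of each region", "comparison"),
    ("which city has more rain than the others", "comparison"),
    ("how did temperature change over time", "trend"),
    ("show the population trend by year", "trend"),
    ("is income correlated with education", "relationship"),
    ("relationship between price and mileage", "relationship"),
    ("what fraction of the budget goes to each department", "composition"),
    ("proportion of votes for each party", "composition"),
]


def extract_features(query):
    """Step 1 (greatly simplified): lowercase word tokens only.

    A real system would also parse the query to recover its syntactic
    and semantic structure; that is omitted here.
    """
    return re.findall(r"[a-z]+", query.lower())


class NaiveBayesIntentClassifier:
    """Step 2 stand-in: multinomial naive Bayes with add-one smoothing."""

    def fit(self, queries, labels):
        self.class_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)
        self.vocab = set()
        for query, label in zip(queries, labels):
            tokens = extract_features(query)
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        self.total = len(labels)
        return self

    def predict(self, query):
        tokens = extract_features(query)
        best_label, best_score = None, -math.inf
        for label, count in self.class_counts.items():
            # Log prior plus the sum of smoothed log likelihoods.
            score = math.log(count / self.total)
            denom = sum(self.word_counts[label].values()) + len(self.vocab)
            for tok in tokens:
                score += math.log((self.word_counts[label][tok] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


def articulate(query, classifier):
    """Translate a query into (intent, chart type) via steps 1 to 3."""
    intent = classifier.predict(query)
    return intent, CHART_FOR_INTENT[intent]
```

With this toy training set, `clf = NaiveBayesIntentClassifier().fit(*zip(*TRAINING))` followed by `articulate("how did sales change over time", clf)` returns `("trend", "line chart")`. A keyword-level classifier like this is only a stand-in; richer syntactic and semantic features from step 1 would be needed to handle genuinely imprecise queries.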

It is important to understand that the goal of this research is to explore how to automatically translate natural and potentially ill-defined language into meaningful visualizations of data in a generalizable way. This relieves users of the burden of learning a complex interface, so that they can focus on articulating better scientific questions and exploring their data with modern visualization techniques.


Citation

Sun, Y., Articulate: Creating Meaningful Visualizations from Natural Language, Submitted as partial fulfillment of the requirements of the degree of Doctor of Philosophy in Computer Science, University of Illinois at Chicago, Graduate College, March 18th, 2012.